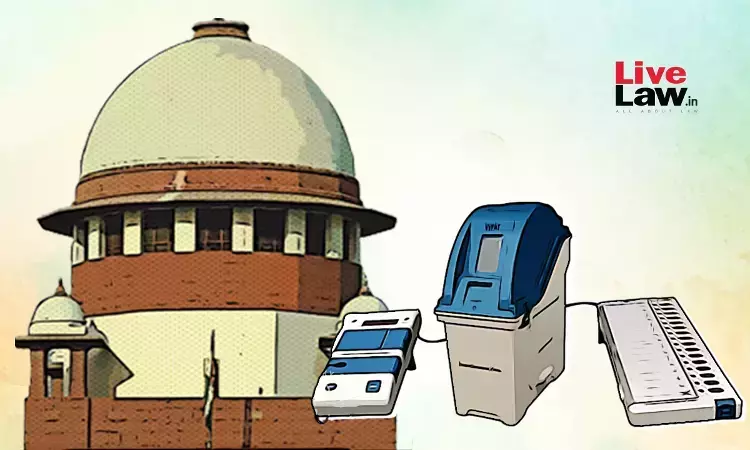

A Landmark Verdict Shapes Electoral Procedures

In a pivotal ruling on April 26, the Supreme Court dismissed petitions seeking 100% cross-verification of Electronic Voting Machine (EVM) data with Voter Verifiable Paper Audit Trail (VVPAT) records. This decision, delivered by Justices Sa[……]