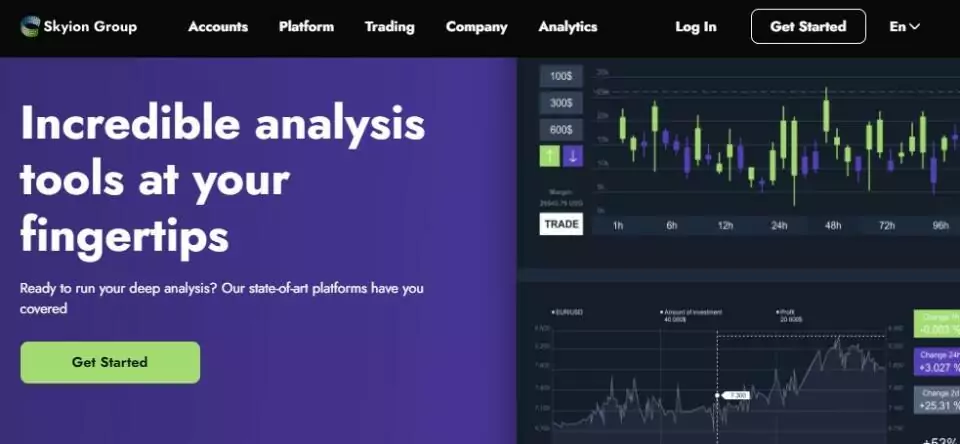

Trading can be genuinely risky because it carries financial risk, particularly when the market is volatile. Still, there is no need to worry when you have Wiksons Group on your side. It recommends sticking to a set plan, avoiding rash decisions driven by fear or greed, and keeping a coordinated p[……]

![Wiksons Group Review: Streamlining Trade Milestones [wiksonsg.com]](https://qrius.com/wp-content/uploads/cwv-webp-images/2024/04/Wiksons-Group-Review.jpg.webp)